
4/9/2023 Webscraper duplicates

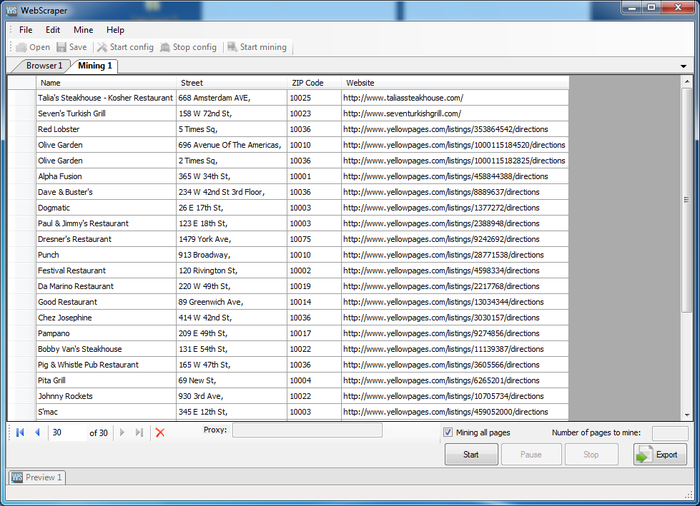

AutoScraper: A Smart, Automatic, Fast and Lightweight Web Scraper for Python

This project is made for automatic web scraping, to make scraping easy. It takes the url or the html content of a web page, plus a list of sample data that we want to scrape from that page. This data can be text, a url, or any html tag value of that page. AutoScraper learns the scraping rules and returns the similar elements. You can then use this learned object with new urls to get similar content, or the exact same element, from those new pages.

You can install the latest version from the git repository using pip.

Getting similar results

Say we want to fetch all related post titles in a stackoverflow page. Import the class with `from autoscraper import AutoScraper`, set `url` to the address of the page, and put one or multiple candidates in `wanted_list` (you can also put urls there to retrieve urls). Then create the scraper with `scraper = AutoScraper()`, call `result = scraper.build(url, wanted_list)`, and `print(result)` to see what was matched. Here we can also pass html content via the `html` parameter instead of the url (`html=html_content`). Now you can use the scraper object to get related topics of any stackoverflow page by passing that page's url to `scraper.get_result_similar`.

Getting exact result

Say we want to scrape live stock prices from yahoo finance. The setup is the same: import `AutoScraper`, set `url` and `wanted_list`, create the scraper with `scraper = AutoScraper()`, and call `scraper.build(url, wanted_list)`. Note that you should update the `wanted_list` if you want to copy this code, as the content of the page dynamically changes.

You can also pass any custom requests module parameter through the `request_args` argument of `build`. For example, you may want to use proxies or custom headers.
